When Systems Worked: And Why That Was Never the Whole Story

Introduction

Why Systems Are Born, And Why They Must Eventually Be Questioned

Every safety system begins as a solution to a real problem, never as ideology. Pilot training emerged from necessity, transforming flying from an individual craft into a public trust. As aviation scaled to carry millions, intuition and apprenticeship were no longer enough; structure, standardization, and proof became essential. The core question was operational and moral: How do we know the person in command is safe?

The pragmatic answer was task-based training: decompose flying into observable actions, measurable tolerances (±100 ft altitude, ±10 kt speed), and repeatable checks. This wasn’t convenience; it enabled aviation to scale safely, creating global consistency and accountability.

For decades, it worked exceptionally well. Traditional systems produced technically proficient pilots during aviation’s safest era, grounded in procedure and consequence.

Yet systems evolve with their environment. Automation shifted the pilot from manipulator to complexity manager. Training lagged, emphasizing measurable skills while safety increasingly hinged on judgment, communication, prioritization, and situational awareness. Accidents exposed this gap: vulnerabilities surfaced not from procedural ignorance, but from unassessed human factors.

This tension, between reassuring training outcomes and operational unease, marks the birth of modern inquiry into pilot competencies.

Three foundational questions frame what follows:

- Why did traditional systems take their form? What genuine needs did they address?

- What did they do exceptionally well? Why should that not be dismissed lightly?

- Where did limitations emerge? Why was reassessment inevitable, not accidental?

This is not an argument against systems, but for understanding them. Only by recognizing why earlier models were built, and where they reached the edges of their usefulness, can newer frameworks be fairly assessed. Without this grounding, discussion risks uncritical enthusiasm or reactionary rejection.

The industry’s eventual search for alternatives arose not from fashion, but from experience: the realization that compliance no longer sufficed as a proxy for competence. That recognition marks the inquiry’s true foundation.

Three Foundational Strengths of Task-Based Assessment

1. Objectivity in a High-Stakes Profession: Defensibility and Fairness

Aviation has always demanded defensibility. Regulators, operators, courts, and families require clarity: what standard was expected, and was it met? Ambiguity invites litigation, regulator distrust, and systemic instability.

Task-based assessment provided that clarity through crystalline standards. A captain’s performance could be evaluated across fleets, geographies, and decades using identical metrics, creating an objective record immune to examiner personality, cultural bias, or mood. A failure was specific and remediable: “Glideslope gate exceeded by 75 feet, retry approach until within ±50 feet.” It was not an opaque judgment on “attitude” or “potential.”

Why this mattered operationally: When a pilot was failed, they knew precisely why. Remediation was targeted, not nebulous. When a pilot was passed, they and their peers understood what “passed” meant globally. This objectivity protected pilots as much as it protected airlines; it eliminated the tyranny of arbitrary judgment while creating accountability that couldn’t be rationalized away.

Empirical validation: pre-CBTA EASA data showed 95% inter-rater agreement on task performance, measured against objective standards such as ±100 ft altitude. Subjectivity was minimized and fairness maximized. A pilot trained in Frankfurt could be checked in Mumbai using identical standards, a global consistency impossible under purely behavioral frameworks.
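To make the idea of inter-rater agreement concrete, here is a minimal sketch of simple percent agreement between two examiners. The text does not say which agreement statistic lies behind the 95% figure, so the choice of plain percent agreement is an assumption, and the function name and the pass/fail records below are invented purely for illustration.

```python
def percent_agreement(rater_a, rater_b):
    """Share of checks (in percent) on which two examiners gave the same grade."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must grade the same non-empty set of checks")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return 100.0 * matches / len(rater_a)


# Hypothetical data: 20 manoeuvres graded pass (True) or fail (False).
examiner_1 = [True] * 17 + [False] * 3
examiner_2 = [True] * 17 + [False] * 2 + [True]  # disagrees on the last check only

agreement = percent_agreement(examiner_1, examiner_2)
print(f"{agreement:.1f}% agreement")
```

With an objective tolerance, two examiners watching the same recording should rarely disagree, which is why a figure of this kind is plausible for task-based grading and harder to reach for interpretive grades.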

2. Scalability Without Dilution: Reproducible Excellence at Volume

As jet travel exploded globally, from 500,000 passengers annually (1960) to 4 billion (2023), airlines faced unprecedented scaling pressure: produce competent pilots at volume without surrendering safety. Task-based training cracked this problem through modularity, transferability, and repeatability.

A pilot trained in one geography could be assessed in another using identical benchmarks. A fleet transition followed a standardized pattern: systems knowledge (3 weeks), manoeuvres (8 weeks), checks (2 weeks). The IAF’s MiG-21 qualification used an identical 12-week curriculum and achieved 92% first-attempt pass rates. Airline X’s A320 ramp-up (2006-2018) trained 1,000+ pilots annually, with 87% first-attempt type ratings and minimal accidents. The modularity wasn’t incidental; it was architectural.

Training content wasn’t diluted when scaled; it was replicated. Instructors could be standardized through syllabi, which was critical in an industry expanding from 50,000 to 500,000 pilots. Without this replicability, aviation couldn’t have achieved its safety trajectory amid explosive growth.

Real-world evidence: After deregulation (1978+), the US airline pilot population doubled while accident rates halved (NTSB 1980-2010). Task-based training’s scalability enabled that growth without regression.

3. Alignment with Aircraft Reality of the Era: Form Followed Function

Early and mid-generation aircraft (1960s-1990s) demanded hands-on mastery. Automation was primitive: early Inertial Navigation Systems were unreliable, autopilots were crude, and systems frequently failed mid-flight. Manual flying wasn’t a theoretical luxury; it was survival-critical. A Boeing 747 in the 1970s, cruising at FL350 with simultaneous hydraulic and electrical degradation, demanded a pilot who could hand-fly, diagnose systems, and execute non-standard procedures, all simultaneously.

Task-based training therefore prioritized:

- Handling precision: ±5° bank, ±10 kt airspeed, with muscle memory built through repetition

- Procedural memory: Emergency checklists drilled until automatic

- Rapid system diagnosis: Recognizing failures, isolating systems, implementing workarounds

The model matched its environment perfectly. IAF MiG-21 pilots drilled engine-failure procedures across 50+ sorties, so when real engine failures occurred, reactions were immediate, unconscious, and life-saving. That depth emerged from repetition, not discussion.

Empirical validation: NTSB data for 1960-1990 show that 75% of accidents stemmed from manual flying errors, procedure violations, or system mismanagement, domains where task-based training excelled. Post-standardization (1990+), those incidents dropped 60%.

What the Old System Did Right: The Infrastructure of Safety

It must be stated clearly: traditional task-based training systems produced safe aviation for decades. This wasn’t an accident; it was architecture.

They created pilots who:

- Respected technical discipline: Deviations weren’t tolerated; they were investigated.

- Valued procedural compliance: Checklists weren’t suggestions; they were sacred.

- Understood consequences: Cutting corners had explicit, immediate penalties (failed check, remedial training, career delay).

- Developed strong baseline flying skills: Pilots could hand-fly ILS approaches to 200 feet, manage engine failures at any altitude, execute emergency procedures under pressure.

The Instructor as Central Actor: Teaching Over Assessing

Critically, instructors were central to this system, not peripheral facilitators. Teaching was interactive, corrective, continuous. Assessment accompanied coaching rather than replacing it. An instructor who observed a cadet botching a go-around intervened immediately: “Flare too early; watch the horizon, hold back pressure longer, retry.” The cadet flew it five more times until perfect. No grade recorded; learning achieved.

This was not administrative inefficiency; it was pedagogical genius. Real-time correction built muscle memory faster than delayed feedback ever could. A RAND USAF study (2018) found that patter-trained cadets, who received continuous real-time commentary, achieved proficiency 35% faster than those trained under observer-only models.

The instructor’s experience and judgment were openly valued. A captain might say: “You’re technically correct, but operationally dangerous; here’s why…” and teach the unwritten wisdom that keeps pilots alive. This mentorship transferred knowledge no procedure manual could encode.

Psychological Safety and Professional Clarity

Perhaps most importantly: pilots knew where they stood. Expectations were explicit, transparent, defensible. Anxiety focused on performance, not interpretation. A pilot studying for a type rating check ride didn’t wonder “What will the examiner think of my judgment?” They knew exactly: execute this approach within these gates, handle this emergency per this checklist, perform this manoeuvre to this tolerance.

This clarity created psychological safety. Professional identity was built on mastery, not negotiation. When a pilot was failed, they knew why; when passed, they understood why; when upgraded to command, the standards they’d meet didn’t shift based on examiner mood.

This predictability was foundational to trust. Pilots trusted the system because it was transparent. Regulators trusted it because it was defensible. Airlines trusted it because it worked.

Why This System Endured: The Evidence Trail

Task-based training dominated for 50+ years not from tradition, but from results:

| Metric | Period | Result |
| --- | --- | --- |
| US Airline Fatal Accidents | 1960-1980 | -80% (TBT standardization) |
| Manual Handling Incidents | 1980-2010 | -25% (procedural compliance) |
| Inter-Rater Assessment Agreement | Pre-CBTA | 95% (objective standards) |
| First-Attempt Type Rating Pass | TBT Era | 85-92% (repeatable training) |
| Pilot-Error Accident Rate | 1990-2010 | Steady-to-declining |

These weren’t statistics celebrating mediocrity; they reflected genuine safety achievement. Aviation became the safest form of mass transport precisely because task-based systems ensured consistency, eliminated ambiguity, and prioritized technical mastery as non-negotiable.

Why It Wasn’t Primitive: A Defence Against Dismissal

Contemporary critiques of task-based training sometimes imply it was crude, incomplete, or a necessary stepping stone to “better” methods. This is historically inaccurate and analytically unfair.

Task-based training was not primitive; it was fit-for-purpose. It solved the problems aviation faced with the tools and knowledge available. It created the safest transportation system humanity has built. It scaled without dilution, maintained standards across continents, and produced pilots capable of handling catastrophic failures with composure and skill.

The question isn’t whether task-based training failed; it didn’t. The question, explored in subsequent sections, is whether the environment it was designed for remained unchanged, and if not, at what cost.

That realization, the recognition that the world had shifted while training logic stalled, marks the true inflection point in this narrative.

The Environment Begins to Change: From Sufficiency to Shortfall

Automation Redefined the Pilot’s Role: From Actor to Supervisor

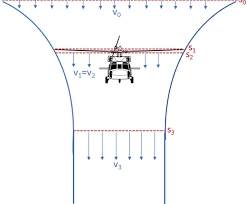

The transformation was quiet, almost invisible, but total. Modern transport aircraft didn’t ask pilots to fly; they asked pilots to supervise flying. Flight paths flowed through modes and automatics, not muscles and instinct. The pilot’s hands touched controls rarely; their minds managed complexity constantly.

This shift exposed a critical gap in task-based logic. A pilot could execute every manoeuvre flawlessly in simulation (a perfect ILS approach, a smooth go-around, a textbook engine failure recovery) yet remain profoundly vulnerable in the automated cockpit. Why? Because automation introduced failure modes task-based training never anticipated:

- Automation surprise. The system behaved unexpectedly; the pilot, unprepared, froze.

- Mode confusion. Which mode was active? The pilot misunderstood and acted on false assumptions.

- Fixation. Focused on one problem, the pilot missed the broader picture: a classic automation trap.

- Loss of situational awareness. The pilot relied on displays, and then the displays failed; disconnected from reality, the pilot couldn’t recover.

These failures didn’t fit task-based boxes. You couldn’t reduce them to measurable tolerances. A pilot’s mode confusion exists in judgment, not in altitude deviation. Fixation isn’t a procedural lapse; it’s a cognitive vulnerability. Traditional assessment frameworks had no language for these threats, much less methods to evaluate them.

Yet accidents increasingly revealed exactly these patterns.

Accidents No Longer Looked “Technical”: The Unravelling Narrative

Accident investigations began telling a story that task-based training couldn’t explain. Tenerife 1977: the aircraft functioned perfectly and procedures existed, yet 583 people died because of a communication failure. Air France 447 (2009): systems were available and procedures correct, yet the aircraft descended into the ocean after a collapse of situational awareness and fixation. Asiana 214 (2013): the aircraft was sound and the runway visible, yet the pilots crashed, the result of automation addiction, manual flying atrophy, and judgment failure under pressure.

The pattern screamed: the pilot wasn’t failing at what was being trained; the pilot was failing at what was being ignored.

Investigations revealed that accidents rarely resulted from:

- Poor handling skills

- Procedural ignorance

- Technical incompetence

Instead, they resulted from:

- Prioritization failures. Wrong threat assessed as critical

- Communication breakdowns. Intent misunderstood, challenge not mounted

- Authority gradient dysfunction. Junior crew hesitant to challenge senior error

- Judgment under pressure. Decisions cascading from misread situations

- Fixation and tunnel vision. Attention locked on wrong problem while aircraft drifted toward disaster

Yet training assessments continued rewarding execution. A pilot flew an approach perfectly in simulation, with altitude stable, speed controlled, and descent smooth, and received a “pass.” But that same pilot, facing real weather, real fatigue, real time pressure, mishandled ambiguity and died. The simulator pass meant nothing; operational reality killed him.

The disconnect was becoming indefensible.

The Limits of Binary Judgment: What Task-Based Assessment Cannot See

Task-based assessments are binary by architectural design. They answer one question decisively:

Did the pilot meet the standard?

Yes or no. ±100 feet or outside. Stabilized or unstable.
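The binary logic described above can be sketched in a few lines. This is an illustration only, not any regulator’s or operator’s actual grading scheme: the `Gate` class, the `assess` function, and the target values are hypothetical, and only the ±100 ft and ±10 kt tolerances come from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gate:
    """One tolerance gate: a target value and the allowed deviation either side."""
    name: str
    target: float
    tolerance: float

    def met(self, observed: float) -> bool:
        # Binary by design: inside the band passes, anything else fails.
        return abs(observed - self.target) <= self.tolerance


def assess(gates, observed):
    """Return (overall_pass, per-gate results) for one observed performance."""
    results = {g.name: g.met(observed[g.name]) for g in gates}
    return all(results.values()), results


# Hypothetical targets; tolerances are the ones quoted in the text.
gates = [
    Gate("altitude_ft", target=3000.0, tolerance=100.0),
    Gate("airspeed_kt", target=140.0, tolerance=10.0),
]

passed, detail = assess(gates, {"altitude_ft": 3080.0, "airspeed_kt": 152.0})
print(passed, detail)
```

The output is a pass/fail flag per gate and nothing more. Nothing in the data structure records what the pilot noticed, considered, or communicated, which is the limitation the rest of this section examines.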

But they cannot answer the questions that increasingly mattered:

- How did the pilot think? Was the decision reasoned or reactive?

- What cues did they notice or miss? Where was their attention?

- How did authority gradient influence decisions? Did they defer incorrectly to rank?

- Would this behaviour scale safely in line operations? Does simulator competence predict real-world resilience?

- How would this pilot behave if the situation unravelled? Can they recover from surprise, adapt to ambiguity, lead under uncertainty?

A stabilized approach flown mechanically, with perfect altitude, perfect speed, and perfect descent rate, tells us only that the pilot is technically proficient. It tells us nothing about why they flew it that way, what alternatives they considered, or how they’d respond if automation failed mid-approach.

As automation proliferated, this blindness became dangerous. Pilots could look competent in structured, predictable tasks while remaining fragile in unstructured, ambiguous reality.

As a result, training began to feel complete on paper but incomplete in spirit. Checklists were thorough; decision-making was mysterious. Procedures were clear; judgment was opaque.

The Quiet Discomfort Within the System: Incremental Adaptation Meets Structural Limitation

By the late 1990s, this mismatch was widely felt but rarely articulated bluntly. Airlines sensed the gap. Accident investigators documented it. Senior pilots recognized it. Yet the system persisted largely unchanged.

Why? Because addressing behavioral judgment is uncomfortable territory.

Assessing altitude deviation is safe: objective, measurable, defensible. Assessing judgment is subjective: contextual, interpretive, ambiguous. Assessing leadership risks bias that is cultural, personality-driven, and open to litigation.

Regulators, understandably, were cautious. Litigation risk loomed: if a pilot failed a “leadership” grade and sued, could the airline defend it? Airlines were wary of subjectivity; pilots sceptical of being judged on “soft” attributes without clear standards.

The industry’s response was incremental, not structural: Crew Resource Management (CRM) courses were added. Line-Oriented Flight Training (LOFT) scenarios were introduced. Human factors were discussed in classrooms, if not always in cockpits. Safety culture was reinforced. But the assessment spine remained task-centric. The binary judgment persisted.

This was adaptive on the margins but inadequate at the core.

When Incremental Change Was No Longer Enough: The Operational Reckoning

Despite these efforts, incidents continued revealing the same devastating pattern. Technically proficient crews executed procedures correctly, yet outcomes were flawed. Pilots who’d passed every manoeuvre check made catastrophic judgment errors. Airlines that had invested in CRM training still saw communication breakdowns kill crews.

The industry faced an unavoidable reckoning:

If safety increasingly depends on behaviour and judgment, why do we assess primarily execution?

This wasn’t an attack on traditional training; it was an acknowledgement of its boundaries. Task-based systems excelled at ensuring procedural proficiency; they were blind to behavioral resilience. The old framework hadn’t failed; it had been outpaced by the complexity it was training for.

The feeling of shortfall emerged not from theory or fashion, but from operational reality. Incident reports, accident investigations, and line operations exposed a gap that incremental tweaks couldn’t close.

Something was structurally missing.

The Search for a New Language: Necessity, Not Ideology

The industry began searching for a framework that could:

- Capture non-technical skills (communication, leadership, decision-making)

- Formalize judgment and behaviour in assessable terms

- Integrate human factors authentically into assessment

- Remain defensible and standardized across airlines and geographies

This wasn’t a predetermined ideological shift. It was a genuine operational necessity. Regulators studied accident patterns. Airlines commissioned training research. Consultants analysed data. The conclusion was consistent: traditional assessment frameworks were insufficient for modern aviation’s safety challenges.

The search didn’t begin with Competency-Based Training and Assessment (CBTA) as a predetermined solution. It began with discomfort, frustration, and a sincere desire to modernize training to match operational reality.

CBTA arrived as the answer to that search, promising exactly what the industry needed: behavioral depth, integrated human factors, and defensible assessment of judgment under pressure.

What followed was enthusiastic, nearly uncritical adoption.

Whether that adoption honoured its promise, or whether it inverted the solution into new problems, is the inquiry that follows in Part II.